Facial biometrics: How smartphones can recognize us

Mohamed Daoudi, IMT Lille Douai – Institut Mines-Télécom


Welcome to the new era: that of facial biometrics. The launch of the iPhone X, a smartphone featuring Face ID facial recognition, demonstrated that this technology has now reached full maturity. This became possible with the introduction of miniature 3D sensors with high-level computing power, combined with extremely efficient learning algorithms such as deep learning.

But what is facial recognition? It means determining that two faces belong to the same person despite changes caused by lighting conditions, pose and facial expressions. Generally speaking, this means finding measurements of the face, such as distances, that stay stable under these changes and can therefore be used to identify it.

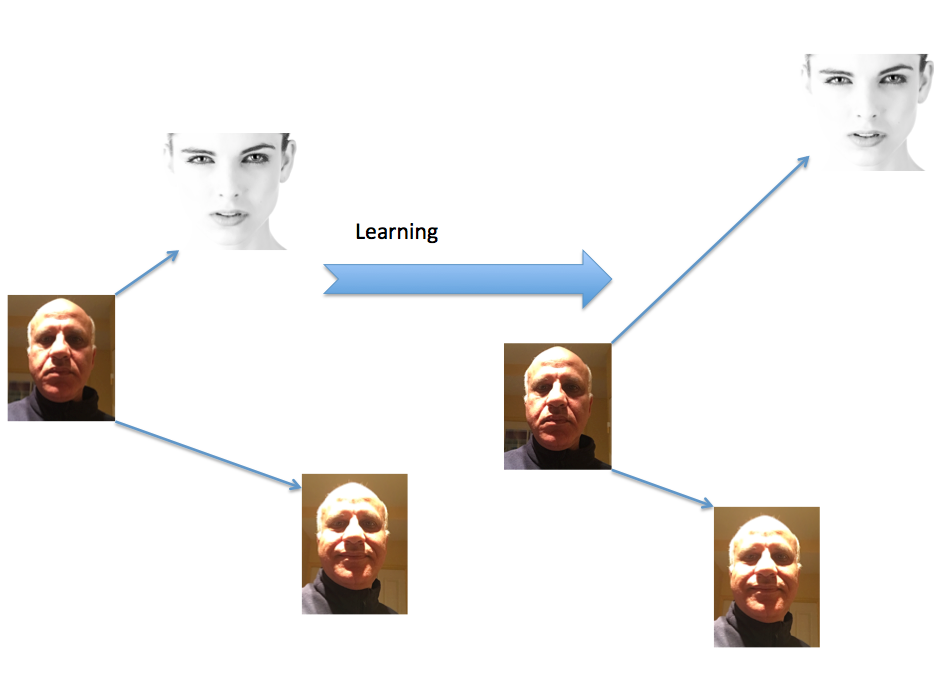

The same face under different shooting and lighting conditions.

In 2014, researchers from Facebook published an article called “DeepFace: Closing the Gap to Human-Level Performance in Face Verification”. To avoid the problems caused by changes in pose, a step was introduced to align the 2D face with a 3D model of the face. The next step involved deep learning, using an artificial neural network with 120 million connections. The training set was composed of 4.4 million faces of celebrities. The neural network was trained to recognize the variations in the faces. The algorithm made it possible to determine whether two photographed faces belonged to the same person, with a reported accuracy of 97.35%.
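The final decision step of such a verification system can be illustrated with a minimal sketch. It assumes that each photograph has already been turned into a feature vector by some neural network; the vectors and the threshold below are made-up values for illustration, not figures from the DeepFace article.

```python
import math

def euclidean_distance(a, b):
    """Euclidean distance between two feature vectors of equal length."""
    if len(a) != len(b):
        raise ValueError("feature vectors must have the same length")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def same_person(features_a, features_b, threshold=0.6):
    """Answer 'same person' when the two vectors are close enough.

    The threshold is a hypothetical value; in practice it would be
    tuned on pairs of photographs whose identity is known.
    """
    return euclidean_distance(features_a, features_b) <= threshold

# Toy vectors standing in for the output of a face network (made-up numbers).
photo_1 = [0.10, 0.80, 0.30]
photo_2 = [0.12, 0.79, 0.33]  # same person, different lighting
photo_3 = [0.90, 0.10, 0.50]  # a different person

print(same_person(photo_1, photo_2))
print(same_person(photo_1, photo_3))
```

In this toy case the first pair falls well under the threshold and the second pair well over it. The difficulty in a real system lies in learning feature vectors for which this stays true across lighting, pose and expression.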

In 2015, researchers from Google published an article entitled “FaceNet: A Unified Embedding for Face Recognition and Clustering”. They showed that they were able to achieve a recognition rate of 99.63% using a database of 2D faces captured in an uncontrolled environment. To accomplish this, the authors proposed the use of a neural network consisting of eleven convolutional layers and three fully connected layers. The idea was to ensure that an image of a specific person would be closer to all the other images of that same person (referred to as positive) than to the images of other people (referred to as negative). The training was carried out using a database of 200 million face images from 8 million people.

During training, the network learned to bring images showing the same face closer together and to push images showing different faces farther apart, as measured by a specific metric.
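This idea of pulling positives closer and pushing negatives away is commonly written as a triplet loss. The sketch below is a minimal illustration of that loss for a single triplet, using squared Euclidean distance; the margin value and the two-dimensional vectors are illustrative assumptions, and a real system would compute this over many triplets of network outputs.

```python
def squared_distance(a, b):
    """Squared Euclidean distance between two vectors of equal length."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def triplet_loss(anchor, positive, negative, margin=0.2):
    """Loss for one (anchor, positive, negative) triplet.

    The loss is zero once the positive is closer to the anchor than the
    negative by at least `margin`; otherwise it measures the shortfall.
    """
    return max(
        0.0,
        squared_distance(anchor, positive)
        - squared_distance(anchor, negative)
        + margin,
    )

# Made-up 2D vectors for illustration.
anchor = [0.0, 0.0]         # an image of a given person
positive = [0.1, 0.0]       # another image of the same person
easy_negative = [1.0, 0.0]  # someone else, already far away
hard_negative = [0.2, 0.0]  # someone else, still too close

print(triplet_loss(anchor, positive, easy_negative))
print(triplet_loss(anchor, positive, hard_negative))
```

The easy negative contributes no loss, since it is already far enough away. The hard negative yields a positive loss, which is what drives training to separate the two identities.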

However, the DeepFace and FaceNet experiments were both based on private databases that are not available to the scientific community. A team from the University of Oxford therefore collected data from the web and built a database of 2.6 million faces from 2,622 people. The team also proposed a network architecture called VGG-face, consisting of 16 convolutional layers and 3 fully connected layers. Today this architecture is widely used by the computer vision community.

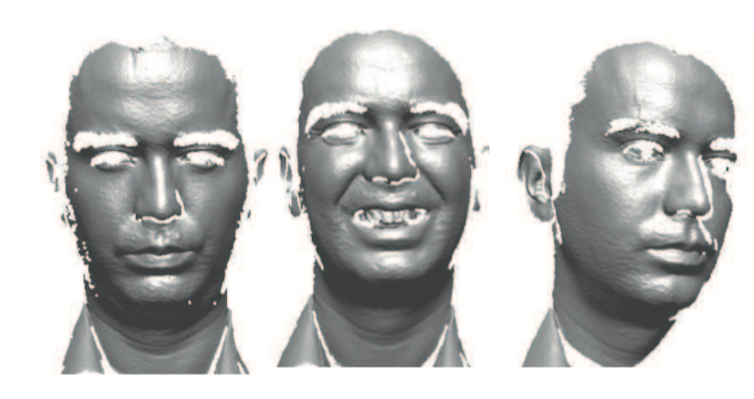

Yet the face is not only a 2D image; it is also a three-dimensional shape. Facial biometrics can exploit this because 3D scanning technologies can now capture faces. The major advantage of using 3D in this context is that the facial recognition algorithms are resistant to changes in lighting and pose. Recent work published in 2013 by our team at IMT Lille Douai in the journal IEEE TPAMI, “3D face recognition under expressions, occlusions, and pose variations”, showed the advantage of this approach. In this article, we proposed to compare two 3D faces by comparing two sets of curves that locally represent the shape of a 3D face. We obtained a recognition rate of 97% on the Face Recognition Grand Challenge testing framework. The results obtained from several international tests reveal the advantages of 3D faces in facial biometrics systems.

Example of 3D faces captured by the Minolta scanner using laser technology.

Now let us get back to the iPhone X and its 3D technology for facial recognition. This feat was made possible by the introduction of miniature 3D sensors on the front of the device: a projector sends 30,000 invisible points onto the user’s face, which are used to create a 3D model of the face. According to Apple, Face ID cannot be fooled by a mere photograph of a face, since the recognition is achieved with a 3D sensor that measures depth.
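To see why depth helps against photographs, consider the toy check below. It is not Apple's method, which is not described here; it is a hypothetical illustration that assumes the sensor returns a list of depth readings in millimetres and that a flat surface shows almost no variation in depth. The function name, threshold and readings are all invented for the example.

```python
from statistics import pstdev

def looks_flat(depth_mm, min_spread_mm=5.0):
    """Return True when the depth readings vary too little for a real face.

    `min_spread_mm` is a hypothetical threshold on the standard deviation
    of the readings, chosen only for this illustration.
    """
    return pstdev(depth_mm) < min_spread_mm

# Invented readings: distance from the sensor to a few projected points.
printed_photo = [400.0, 400.5, 399.8, 400.2, 400.1]  # sheet held facing the sensor
real_face = [380.0, 395.0, 410.0, 402.0, 388.0]      # nose closer, ears farther

print(looks_flat(printed_photo))
print(looks_flat(real_face))
```

A check this simple would be defeated by a tilted or curved photograph, so it only conveys the intuition: a 2D camera sees the same picture in both cases, whereas depth readings differ.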

Mohamed Daoudi, Professor at the IMT Lille Douai, Lille Center of Research in Computer Science, Signal and Automatic Control, IMT Lille Douai – Mines-Télécom Institute

The original version of this article was published in French on The Conversation.
