Human-robot collaboration: Industrial utopia or tomorrow’s reality?

In the factories of the future, robots will not replace humans but assist them. Researchers Sotiris Manitsaris from Mines ParisTech and Patrick Hénaff from Mines Nancy are working on a control system design based on artificial intelligence that can be used by all types of robots. The aim of this AI is to identify human actions and adapt to the pace of machine operators in an industrial context. Above all, knowledge about humans and their movements is the key to successful collaboration with machines.

This article is part of our dossier “Far from fantasy: the AI technologies which really affect us.”

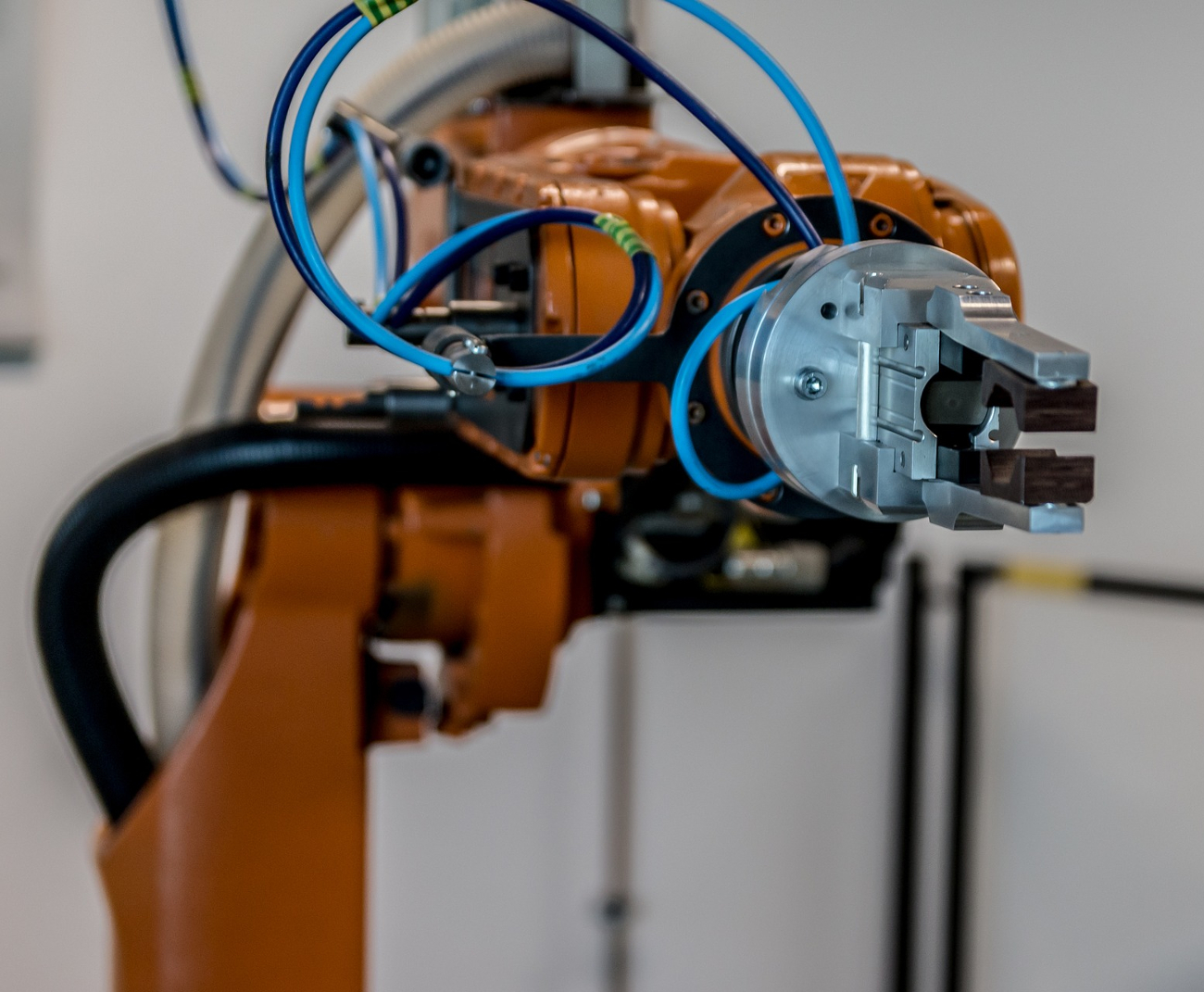

A robotic arm scrubs the bottom of a tank in perfect synchronization with the human hand next to it. They move at the same pace, a rhythm dictated by the way the operator moves. Sometimes fast, sometimes slightly slower, this harmony of movement is achieved through the artificial intelligence installed in the anthropomorphic robot. In the neighboring production area, a self-driving vehicle dances around the factory. It dodges every obstacle in its way until it delivers the parts it is transporting to an operator on the production line. With impeccable timing, the operator retrieves the parts, assembles them, and then leaves them on the transport tray of the small vehicle, which sets off immediately. During its journey, the machine passes several production areas where humans and robots carry out their jobs “hand-in-hand”.

Even though anthropomorphic robots such as robotic arms, as well as small self-driving vehicles, are already being used in some factories, they are not yet capable of collaborating with humans in this way. Currently, robot manufacturers pre-integrate sensors and algorithms into their devices, but the robots’ future interactions with humans are not considered during development. “At the moment, there are a lot of situations where human operators and robots work side-by-side in factories but do not interact much. This is because robots don’t understand humans when they’re in contact with them,” explains Sotiris Manitsaris, a researcher specializing in collaborative robotics at Mines ParisTech.

Human-robot collaboration, or cobotization, is an emerging field in robotics that redefines the function of robots as working “with” and not “instead of” humans. By offsetting the weaknesses of each partner with the strengths of the other, this approach allows factory productivity to increase while retaining jobs. Human workers bring flexibility, dexterity and decision making, while robots bring efficiency, speed and precision. But to collaborate properly, robots have to be flexible, interactive and, above all, intelligent. “Robotics is the tangible aspect of artificial intelligence. It allows AI to act on the outside world with a constant perception-action loop. Without this, the robot would not be able to operate,” says Patrick Hénaff, a specialist in bio-inspired artificial intelligence at Mines Nancy. From the automotive industry to fashion and luxury goods, a wide range of sectors are interested in integrating robotic collaboration.

Towards a Successful Partnership Focused on Human Action

Beyond direct interaction between humans and machines, the entire production cycle could become more flexible, depending more on the operator’s pace and the way they work. “The robot has to respond to the needs of humans but also anticipate their behavior. This allows it to adapt dynamically,” explains Sotiris Manitsaris. For example, on an assembly line in the automotive industry, each task is carried out within a specific time. If the robot anticipates the operator’s movements, it can also adapt to their speed. This issue has been the focus of work with PSA Peugeot Citroën as part of the chair in Robotics and Virtual Reality at Mines ParisTech. So far, researchers have been able to set up a first promising human-robot collaboration. In this experiment, which took place on a workbench, a robot brought parts at a rate that depended on the operator’s execution speed. The operator then assembled the parts and screwed them together before handing them back to the robot.
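To make the idea of adapting to the operator’s pace more concrete, here is a minimal sketch in Python. It is not the method used in the work described above: it simply assumes the robot can time each of the operator’s assembly cycles and smooths those timings with an exponential moving average. The class name `PaceEstimator`, the smoothing factor `alpha` and the travel time are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass
class PaceEstimator:
    """Tracks an operator's cycle time with an exponential moving average.

    Hypothetical illustration: alpha and the initial estimate are arbitrary.
    """
    alpha: float = 0.3          # weight given to the newest observation
    estimate_s: float = 60.0    # initial guess for one assembly cycle (seconds)

    def update(self, observed_s: float) -> float:
        """Blend a newly observed cycle time into the running estimate."""
        self.estimate_s = (1 - self.alpha) * self.estimate_s + self.alpha * observed_s
        return self.estimate_s

    def delivery_delay(self, travel_s: float) -> float:
        """Seconds to wait before leaving so that parts arrive as the cycle ends."""
        return max(0.0, self.estimate_s - travel_s)


if __name__ == "__main__":
    pace = PaceEstimator(alpha=0.5, estimate_s=60.0)
    for observed in (40.0, 40.0):      # the operator speeds up
        pace.update(observed)
    print(pace.estimate_s, pace.delivery_delay(travel_s=10.0))
```

As the operator speeds up or slows down, the estimate follows with a lag set by `alpha`, and the robot shortens or lengthens its waiting time accordingly. A real system would also need to anticipate movements rather than only react to completed cycles.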


Another aim of cobotics is to relieve human operators of difficult tasks. As part of the Horizon 2020 Framework launched at the end of 2018, Sotiris Manitsaris has tackled the development of ergonomic gesture recognition technologies and the communication of this information to robots. First, the gestures are recorded using connected devices (smartwatch, smartphone, etc.) worn by the operator. The gestures are then learned by artificial intelligence. These new models of collaboration, which are centered on humans and their actions, are designed so that they can be implemented on any robot model. Once a movement has been recognized, the remaining question is what information to communicate to the robot so that it can adapt its behavior without affecting its own performance or that of its human collaborator.

Rhythmic Collaboration

Understanding movements and implementing them in robots is also central to the work conducted by Patrick Hénaff. His latest work uses an approach inspired by neurobiology and is based on knowledge of animal motor systems. “We can consider artificial intelligence as being made up of a high-level structure, the brain, and of lower-level intelligence which can be dedicated to movement control without needing to receive higher-level information continuously,” Hénaff explains. More specifically, this research deals with rhythmic gestures, in other words, automatic movements that are not directly ordered by the brain. Instead, these gestures are commanded by neural networks located in the spinal cord. Examples include walking or wiping a surface with a sponge.

Once the action is initiated by the brain, a rhythmic movement occurs naturally, at a pace dictated by our morphology. However, it has been demonstrated that for some of these gestures, the human body is able to synchronize naturally with external signals (visual or auditory) that are also rhythmic. This happens, for example, when two people walk together. “In our algorithms, we try to determine which external signals we need to integrate into our equations. This is so that a machine synchronizes with either humans or its environment when it carries out a rhythmic gesture,” describes Patrick Hénaff.
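The principle of a rhythm generator that locks onto an external signal can be illustrated with a generic adaptive phase oscillator. This is a textbook-style sketch, not the equations used by the researchers: the robot’s phase is pulled towards the observed human phase by a coupling term, and its own frequency slowly drifts in the same direction. The function name `synchronize` and all parameter values (`coupling`, `learning`, the two frequencies) are assumptions chosen for illustration.

```python
import math


def synchronize(human_hz=1.0, robot_hz=0.8, coupling=2.0, learning=1.0,
                dt=0.01, duration_s=60.0):
    """Euler-integrate an adaptive phase oscillator driven by an external rhythm.

    Illustrative model (all parameters are assumptions):
        d(robot_phase)/dt = omega + coupling * sin(human_phase - robot_phase)
        d(omega)/dt       = learning * coupling * sin(human_phase - robot_phase)
    Returns the robot's final frequency (Hz) and final phase error (radians).
    """
    human_omega = 2 * math.pi * human_hz   # angular speed of the observed gesture
    omega = 2 * math.pi * robot_hz         # robot's own starting angular speed
    human_phase = 0.0
    robot_phase = 0.0
    for _ in range(int(duration_s / dt)):
        error = math.sin(human_phase - robot_phase)
        robot_phase += (omega + coupling * error) * dt   # phase is pulled to the human
        omega += learning * coupling * error * dt        # frequency adapts more slowly
        human_phase += human_omega * dt
    diff = human_phase - robot_phase
    phase_error = math.atan2(math.sin(diff), math.cos(diff))  # wrapped to (-pi, pi]
    return omega / (2 * math.pi), phase_error


if __name__ == "__main__":
    final_hz, final_error = synchronize()
    print(f"robot frequency: {final_hz:.3f} Hz, phase error: {final_error:.4f} rad")
```

With these values the robot starts at 0.8 Hz and should settle close to the human’s 1 Hz with a phase error near zero. Here the "external signal" fed into the equations is simply the phase of the human gesture; deciding which real sensor signals can play that role is precisely the question raised in the quote above.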

From the Laboratory to the Factory: Only One Step

In the laboratory, researchers have demonstrated that robots can carry out rhythmic tasks without physical contact. With the help of a camera, a robot observes the hand gestures of a person waving hello, then reproduces them at the same pace and synchronizes itself with the gesture. Experiments were also carried out on an interaction involving contact: a handshake. The robot learns how to hold a human hand and synchronizes its movement with the person opposite it.

In an industrial setting, an operator carries out numerous rhythmic gestures, such as sawing a pipe, scrubbing the bottom of a tank, or polishing a surface. To carry out tasks in cooperation with an operator, the robot has to be able to reproduce the operator’s movements. For example, if a robot saws a pipe with a human, the machine must adapt its rhythm to the person’s so that it does not cause musculoskeletal disorders. “We have just launched a partnership with a factory in order to carry out a proof of concept. This will demonstrate that new generation robots can carry out, in a professional environment, a rhythmic task which doesn’t need a precise trajectory but in which the final result is correct,” describes Patrick Hénaff. Researchers now want to tackle dangerous environments and the most arduous tasks for operators, not with the aim of replacing them, but of helping them work “hand-in-hand”.

Article written for I’MTech by Anaïs Culot
